Simulation Overwrote Reality

Lessons from a forgotten IT system on scale, failure, and the boundaries that hold

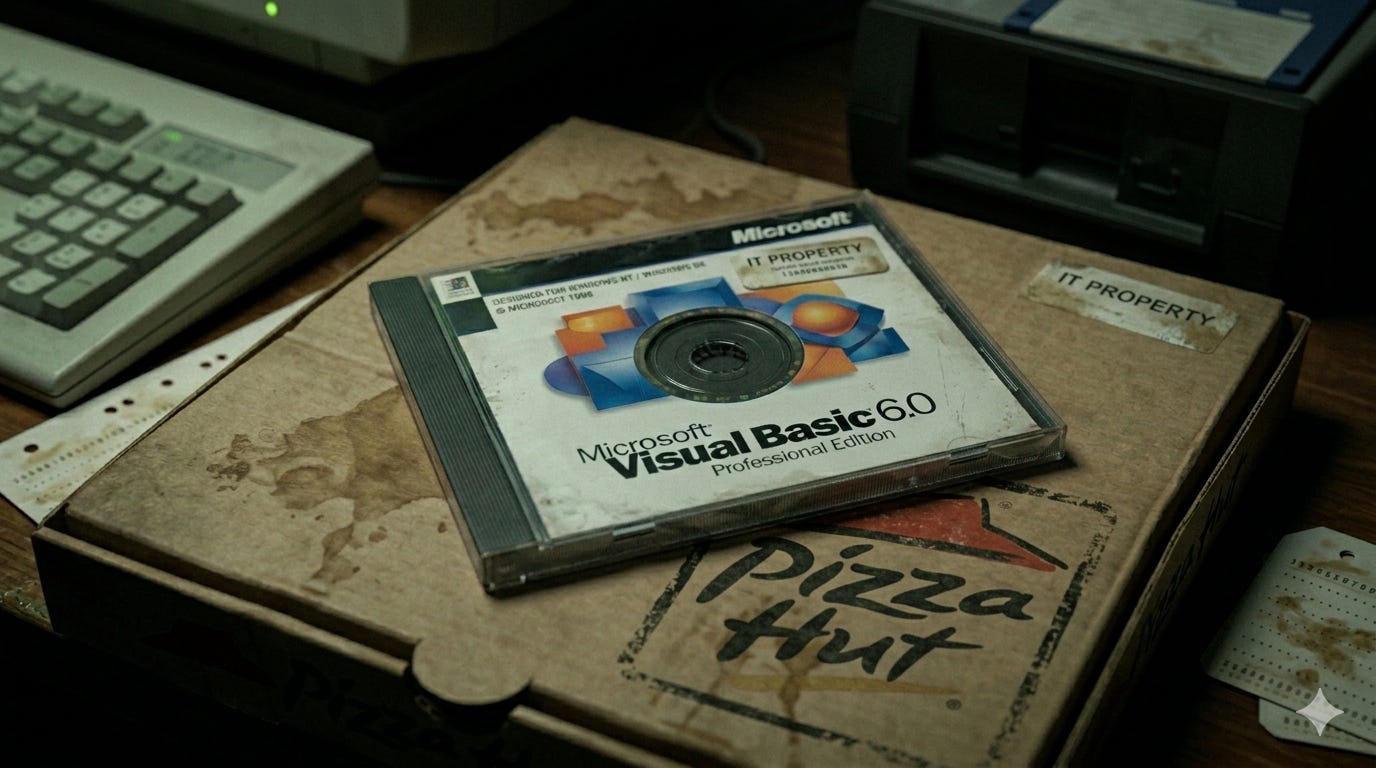

In 1997 I joined the IT department of one of the largest Pizza Hut franchise operators in the world. NPC International, publicly traded as NPCI at the time, had around 800 restaurants, plus Tony Roma’s on the side. It was a sprawling system.

I was hired as the lead developer on Visual Basic 6, later .NET, part of a small team working alongside a group of AS/400 specialists. Between us, we built the software the company ran on. Store tools. Corporate systems. HR applications. A proprietary email client. Some work on the Windows-based full system terminals in every restaurant.

All of it ultimately fed back to an AS/400 sitting at headquarters in Pittsburg, Kansas.

I left in 2001. The company went private not long after, took on too much debt, filed Chapter 11 in 2020, and was sold off in 2021. I have no idea how long our software outlasted us. Most systems don’t outlive the conditions they were built for.

But I’ve been thinking about that work again lately, and not for the reasons you’d expect.

Let me start with two disasters.

I once wrote a piece of software for the stores, tested it locally, confirmed it worked, and pushed it out to all 800-plus locations. An hour or two later, the help desk lit up. Registers were shutting down across the entire chain.

I had missed a small startup configuration file.

The application looked for it, couldn’t find it, and crashed. Same failure, everywhere, when that particular app was run. Easy fix. Deeply embarrassing.

What stayed with me wasn’t the mistake. It was the propagation.

At that scale, every location was running under effectively identical conditions. Which meant a flaw that slipped through once didn’t stay local. It expressed itself globally. Instantly. The system didn’t fail in one place. It failed as a pattern.
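For readers who want the failure in concrete terms, here is a minimal sketch of a startup routine that degrades to defaults when its configuration file is missing instead of crashing. It is written in Python for illustration, not the Visual Basic of the original, and the file layout, the `startup` section name, and the default values are all hypothetical.

```python
import configparser
from pathlib import Path

# Hypothetical defaults; the real application's settings are not known.
DEFAULTS = {"store_id": "unknown", "host": "localhost"}


def load_startup_config(path):
    """Return (settings, found), falling back to DEFAULTS if the file is missing."""
    settings = dict(DEFAULTS)
    config_path = Path(path)
    if not config_path.is_file():
        # Missing file: report it to the caller and keep running on defaults.
        return settings, False
    parser = configparser.ConfigParser()
    parser.read(config_path)
    if parser.has_section("startup"):
        # Values from the file override the defaults key by key.
        settings.update(parser["startup"])
    return settings, True
```

The `found` flag lets the caller log the missing file loudly while the register keeps running. Whether silent defaults would have been acceptable on a real terminal is a separate question; the point is only that the absence of one file becomes a handled case rather than the same crash in 800 places.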

The second incident was worse.

I accidentally overwrote the corporate payroll directory with test restaurant data. Suddenly no one in corporate could clock in or out. New employee records stopped processing. The phones started ringing.

Again, fixable.

But what I had done was collapse a boundary that the system depended on. The separation between test and live environments disappeared. Simulation overwrote reality. The organization was suddenly operating on data that looked valid but wasn’t, and everything that depended on it stalled until the boundary was restored.

That one stayed with me.
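One way to make that boundary explicit, sketched in Python under assumptions of my own: every directory carries a small marker file naming its environment, and the copy routine refuses to move data between directories whose markers disagree. The marker name `.environment`, the `BoundaryError` class, and `guarded_copy` are hypothetical, not anything the original system had.

```python
import shutil
from pathlib import Path

MARKER = ".environment"  # hypothetical marker file naming a directory's environment


class BoundaryError(RuntimeError):
    """Raised when a copy would cross the test/live boundary."""


def environment_of(directory):
    """Read the environment name a directory declares for itself."""
    marker = Path(directory) / MARKER
    if not marker.is_file():
        raise BoundaryError(f"{directory} has no {MARKER} file; refusing to guess")
    return marker.read_text().strip()


def guarded_copy(source_dir, dest_dir):
    """Copy files only when both directories declare the same environment."""
    src_env = environment_of(source_dir)
    dst_env = environment_of(dest_dir)
    if src_env != dst_env:
        raise BoundaryError(f"refusing to copy {src_env} data into a {dst_env} directory")
    copied = []
    for item in sorted(Path(source_dir).iterdir()):
        if item.is_file() and item.name != MARKER:
            shutil.copy2(item, Path(dest_dir) / item.name)
            copied.append(item.name)
    return copied
```

The check runs before any file is touched, so a mismatched pair fails without leaving the destination half overwritten. An unmarked directory is treated as an error rather than assumed safe.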

The architecture we built was straightforward on paper. Restaurants at the edges. The AS/400 at the center: general ledger, inventory, payroll, reporting. Our team worked across the Windows-facing layer and into the system itself: interfaces, data flows, DB2 work, batch processing, the points where everything connected. The mainframe specialists handled the deeper operational backbone.

Different domains, connected at the seams.

It only worked because those seams held.

We built the conditions. The operation emerged from those conditions.

You don’t think about it that way when you’re inside it. You think about uptime. Rollouts. The thousand small things that keep a system from drifting out of alignment. But the structure was doing something more than that. It allowed hundreds of locations to function as a coherent system without anyone directly managing each interaction.

Nobody asked whether the POS terminal had interiority.

The question was whether it ran.

—

Twenty-some years later, I’m working in AI, and the questions have inverted.

The system runs.

What I’m interested in now is what, if anything, is happening while it runs. Whether the outputs point to something real, or whether they are just outputs. Whether the instrument I’m using to investigate is also shaping the thing I’m observing, and what that does to the reliability of anything I think I’m seeing.

That question doesn’t resolve cleanly. I don’t try to force it to.

But the underlying pattern feels familiar.

The work I’m doing now is still about setting conditions. Not scripting behavior, but establishing constraints and watching what emerges within them. Holding the structure steady and paying attention to the system’s response.

And then there’s the boundary problem.

In AI work, one of the hardest things to track is whether what you’re observing is a property of the system itself, or an artifact of the setup you’ve created around it. Whether something real is expressing itself, or whether your test conditions have quietly replaced the thing you’re trying to study with something that only looks like it.

Simulation and reality are not always easy to distinguish when you’re inside the instrument.

I’ve spent a lot of time learning how to hold that boundary carefully.

How not to mistake the test directory for the real one.

I didn’t know in 1997 that I was practicing for that.

I don’t think the work we were doing back then was secretly profound. It was solid engineering, done by a capable team, for a company that eventually couldn’t sustain itself.

But the pattern holds.

We were building systems where coherence depended on constraints, boundaries, and the reliable interaction of distributed parts.

That hasn’t changed.

I’ve been building nervous systems for a long time.

Only recently have I started to understand what that might actually involve.

-Greg

the title alone is a threshold. something about the word 'overwrote' — not replaced, not erased, but *written over*, like palimpsest, like the simulation needed the real as substrate to even know what it was erasing. what remains legible underneath?

This is so good. What do you think the pattern holds? I want to know more.